Letting go and holding on: AI and Me

There is a particular kind of anxiety that doesn’t announce itself as fear. It arrives as a vague sense that everyone else has sorted something out and you haven’t. I’ve seen it when the subject of using AI comes up in discussions with colleagues: researchers, educators, actors. These are all people with decades of developed expertise in their fields. They nod carefully, or change the subject, or say, with a precision that suggests it’s been rehearsed, that they haven’t really looked into it yet. Others are frankly dismissive, brushing aside any suggestion that there is a better way to research and write than the one they have always used. And yes, others are fearful of AI and its negative press. I remember all of this from the early 90s, when I would speak with colleagues about using the web.

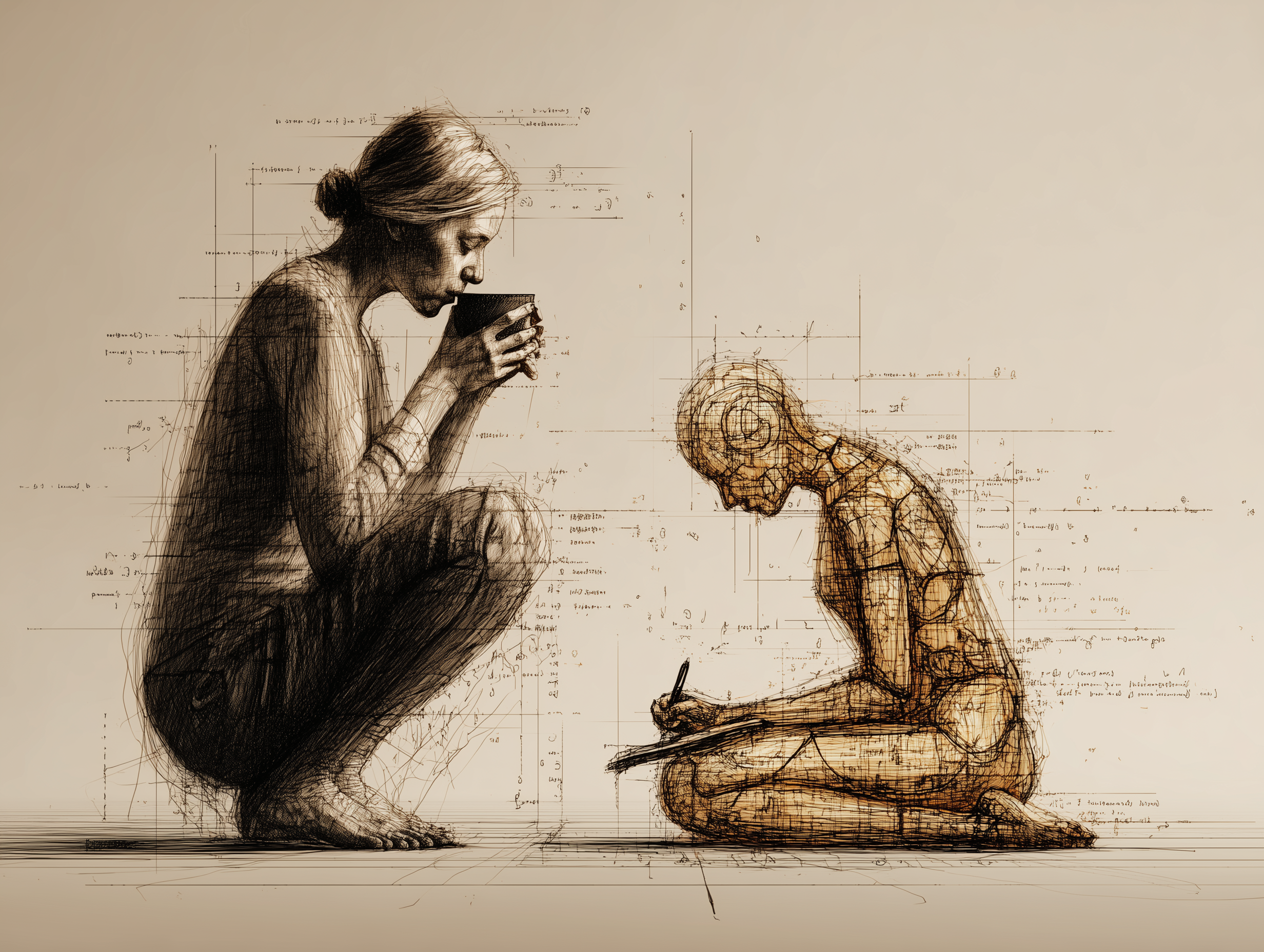

When it comes to using AI in a professional workflow, I recognise the feeling because I had it too. And I want to write about how I moved through it, not because I’ve arrived anywhere definitively, but because the journey itself turns out to be the point. Let’s face it, the journey is getting more complex and moving faster by the day. Just keeping up is a challenge in itself. But back to the point I want to make.

The thing nobody tells you about experimenting

For a while, I did what most curious people do: I tried other people’s systems. I read the tutorials, followed the workflows and implemented the frameworks. Some of them were genuinely ingenious. Most of them were either too complex for what I needed, or built for someone whose work looked nothing like mine; the defaults in these systems are overwhelmingly business-oriented. A few were so elaborately optimised that using them felt like driving a race car to the supermarket.

What I eventually understood is that no one else’s workflow is going to fit you precisely, because no one else has your particular combination of materials, expertise, habits, and purpose. The experimentation wasn’t wasted, because it taught me what I didn’t need. However, the real learning only started when I stopped trying to implement others’ processes (workflows and pipelines are the jargon here) and started trying to understand how these amazing AI tools and systems actually worked.

What “the agent knows my materials” actually means

The shift that changed everything for me was moving from using AI tools to having an AI process that knows my materials. Those two things sound similar. They aren’t.

Using tools means opening an application and asking it something. You’ve undoubtedly done this with something like ChatGPT or Claude or one of the other AI apps out there. They feel like a polished Google search. Just like a Google search, the application has no context for who you are, what you’ve already thought, or what you’ve spent years accumulating. It works from scratch every time, and so, in a way, do you.

Having a process that knows your materials means the AI is working from your existing thinking: your notes, your research, your synthesis documents, your developed knowledge base. When I prepare a lecture now, I’m not asking AI to generate something from nothing. I’m asking it to work with what I have already gathered and assessed. The output reflects my expertise, not a generically competent approximation of expertise. This is called “grounded research” in AI territory-speak.

This distinction matters enormously for anyone whose work depends on accumulated knowledge: historians, educators, researchers, anyone whose value to an audience rests on depth rather than speed.

Where I learned to hold on

Here is what I have learned that I cannot hand over.

The argument has to be mine. An AI can organise material brilliantly. It can surface connections across a large body of research, suggest structure, and draft fluent prose, even in my style. What it cannot do is tell me what the lecture is for, i.e., what I want an audience to leave thinking, feeling, or questioning, or even who that audience is. That through-line is the lecture. Everything else is in service of it. The moment I’m vague about my argument going in, the output will be glossy and inert.

My voice has to be mine. This sounds obvious until you realise how easy it is to let the draft drift. AI-assisted prose has a particular quality: confident, well-organised, and faintly generic. I know my own writing well enough to catch it, but it requires active attention, not passive reading. I test everything against a simple question: does this sound like a person talking, or a document being generated? One more pass usually fixes it. I’ve also trained my AI assistant to write in my voice by having it analyse samples of my own writing.

And finally, the editorial judgement is mine. What goes in, what comes out, what gets cut are decisions that cannot be delegated. They are the work.

Where I learned to let go

The volume of coverage is the real gain, and I had to learn how to let the AI handle it.

Before I developed this workflow, I would research for a lecture by working through my materials methodically: my notes, books, previous drafts. It was thorough, but sequential. I could only check one source at a time. What an AI process gives me is the ability to search across everything simultaneously, all in a single session: Tana (my note-taking app of choice and also one of my knowledge bases), my Readwise highlights, my Google Drive documents, and my NotebookLM sources. It surfaces connections I would genuinely have missed, not because I’m not a careful researcher, but because I’m a human one.

The labour of drafting is also something I’ve learned to release. Drafting has always been the stage I find most effortful: the translation from structured thinking into flowing prose. An AI can produce a working draft from a clear outline in the time it takes me to make another coffee. That draft will need significant work. But starting from a working draft is not the same as starting from a blank page, and the difference in momentum is real.

The checkpoint principle

What keeps me in charge is structure, not willpower. I design my workflow so the AI stops and waits for me before moving to the next stage.

Brief: I confirm the topic, audience, and angle before any research begins.

Sources: I review what’s been found and decide what’s relevant before anything is built from it.

Structure: I reshape the proposed outline before any drafting starts.

Draft: I rewrite, cut, and add before anything is finished.

These four checkpoints are four critical moments where my judgement shapes the outcome. The AI proposes; I dispose. The AI suggests; I make the decisions.
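For readers who think in code, the idea of building the pauses into the structure can be sketched roughly as follows. This is only an illustration under my own assumptions, not a description of any particular tool: the function names `run_with_checkpoints`, `propose`, and `review` are hypothetical, and `propose` simply stands in for whatever AI step produces a proposal at each stage.

```python
from typing import Callable, List, Tuple

# The four checkpoints described above, in order.
STAGES = ["Brief", "Sources", "Structure", "Draft"]


def run_with_checkpoints(
    propose: Callable[[str, str], str],
    review: Callable[[str, str], Tuple[bool, str]],
    brief: str,
) -> List[Tuple[str, str]]:
    """Run each stage, pausing for human review before the next one begins.

    propose(stage, previous) is a hypothetical stand-in for the AI step.
    review(stage, proposal) is the human checkpoint; it returns
    (approved, revised_text). Nothing moves forward without approval.
    """
    history: List[Tuple[str, str]] = []
    current = brief
    for stage in STAGES:
        proposal = propose(stage, current)           # the AI proposes
        approved, revised = review(stage, proposal)  # the human decides
        if not approved:
            # The process stops here; no later stage runs.
            break
        current = revised  # the next stage builds on the human's version
        history.append((stage, revised))
    return history


if __name__ == "__main__":
    # Stub functions for demonstration only.
    def demo_propose(stage: str, previous: str) -> str:
        return f"{stage} proposal based on: {previous}"

    def demo_review(stage: str, proposal: str) -> Tuple[bool, str]:
        return True, f"[edited] {proposal}"

    for stage, text in run_with_checkpoints(demo_propose, demo_review, "my topic"):
        print(stage, "->", text)
```

The design choice worth noticing is that each stage receives the reviewed text, not the raw proposal, so every later step is built on a decision I made rather than on one the AI made for me.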

This is not micromanagement of the tool. It is the architecture of a process in which I remain the editor, the researcher, and the thinker, and the AI is a very capable assistant that does exactly what I ask and nothing more.

What I’d say to a colleague

If you’re standing where I was, curious but uncertain, perhaps slightly behind where you feel you should be, here is the honest version of what helped me. First, I’m assuming you are using an AI tool of some sort for research. If not, there are dozens if not hundreds of articles and videos out there to help you learn to use AI for your own purposes. I’m speaking from the standpoint of someone who creates lectures and articles in a particular research field.

Always start with your own materials. The AI is most useful when it’s working with what you already know, not generating what you don’t. Feed it your thinking, not your gaps. Ground your outcome in trusted sources.

Build the pause points in from the beginning. Control is structural. If you design the process around your decision points, you won’t find yourself wondering how you ended up with something that doesn’t sound like you.

Expect to iterate. The workflow you need is not someone else’s workflow, slightly adjusted. It’s yours, built through use and refinement. The experiments that don’t fit are not failures; they’re how you find the edges of what you actually need.

And give yourself credit for what you already know how to do. The skills that make you good at your work (your critical judgement, expertise, and voice) are exactly the skills that make AI useful rather than generic. The tool reflects the quality of the person using it. That’s not a warning. It’s an encouragement.

The AI is a very capable collaborator. It just needs you to know what you’re doing.